
Wancong (Kevin) Zhang

I am a PhD student at NYU Courant, advised by Yann LeCun. My research focuses on world models, planning, and representation learning, with the goal of building agents that can learn from observation and interact with the world in an open-ended way. I am currently most interested in hierarchical world models and planning across multiple temporal scales, where structure in the model can enable better long-horizon reasoning, more efficient control, and stronger generalization from offline or reward-free data. More broadly, I am interested in self-supervised learning for decision making, computer vision, and embodied intelligence.

Email / CV / Google Scholar / GitHub

News


Research (Updated 04/2026)

Hierarchical Planning with Latent World Models

Wancong Zhang, Basile Terver, Artem Zholus, Soham Chitnis, Harsh Sutaria, Mido Assran, Amir Bar, Randall Balestriero, Adrien Bardes, Yann LeCun, Nicolas Ballas

Website / Code

arXiv, 2026

Introduces hierarchical planning across latent world models at multiple temporal scales, improving long-horizon zero-shot control while reducing planning-time compute on robotics and simulated control tasks.

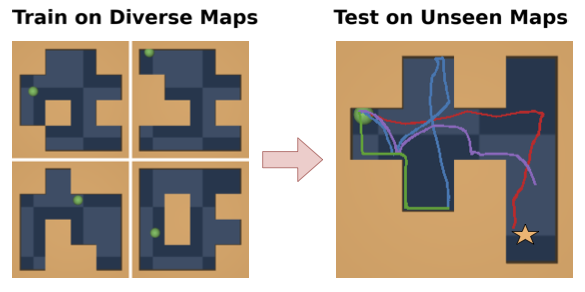

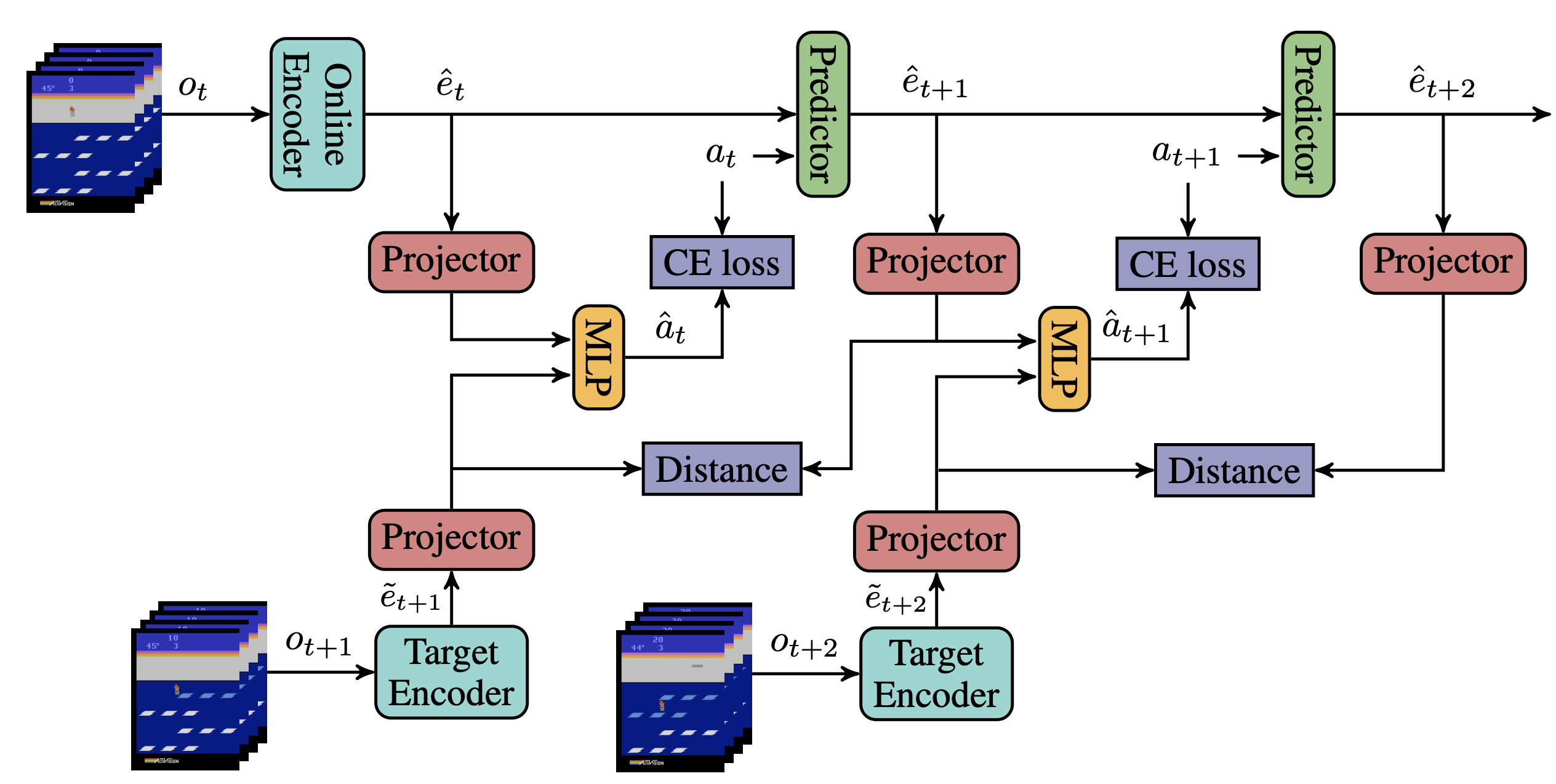

Learning from Reward-Free Offline Data: A Case for Planning with Latent Dynamics Models

Wancong Zhang*, Vlad Sobal*, Kyunghyun Cho, Randall Balestriero, Tim Rudner, Yann LeCun

Website

Advances in Neural Information Processing Systems (NeurIPS), 2025

Best Paper Award, ICML 2025 Workshop on Building Physically Plausible World Models

This work introduces zero-shot planning using the Joint Embedding Predictive Architecture (JEPA) trained from offline trajectories, demonstrating its strengths in generalization, trajectory stitching, and data efficiency compared to traditional offline RL.

Light-weight probing of unsupervised representations for Reinforcement Learning

Wancong Zhang, Anthony GX-Chen, Vlad Sobal, Yann LeCun, Nicolas Carion

Code

Reinforcement Learning Conference (RLC), 2024

Presents an efficient probing benchmark for evaluating how well unsupervised visual representations suit reinforcement learning (RL), and applies it to systematically improve pre-existing SSL recipes for RL.

Conformer-1: Robust ASR via Large-Scale Semisupervised Bootstrapping

Wancong Zhang, Luka Chkhetiani, Francis McCann Ramirez, Yash Khare, Andrea Vanzo, Michael Liang, Sergio Ramirez Martin, Gabriel Oexle, Ruben Rousbib, Taufiquzzaman Peyash, Michael Nguyen, Dillon Pulliam, Domenic Donato

2024

Showcases an industrial-scale end-to-end Automatic Speech Recognition model trained on 570k hours of speech audio data using Noisy Student. It achieves competitive word error rates against larger and more computationally expensive models.

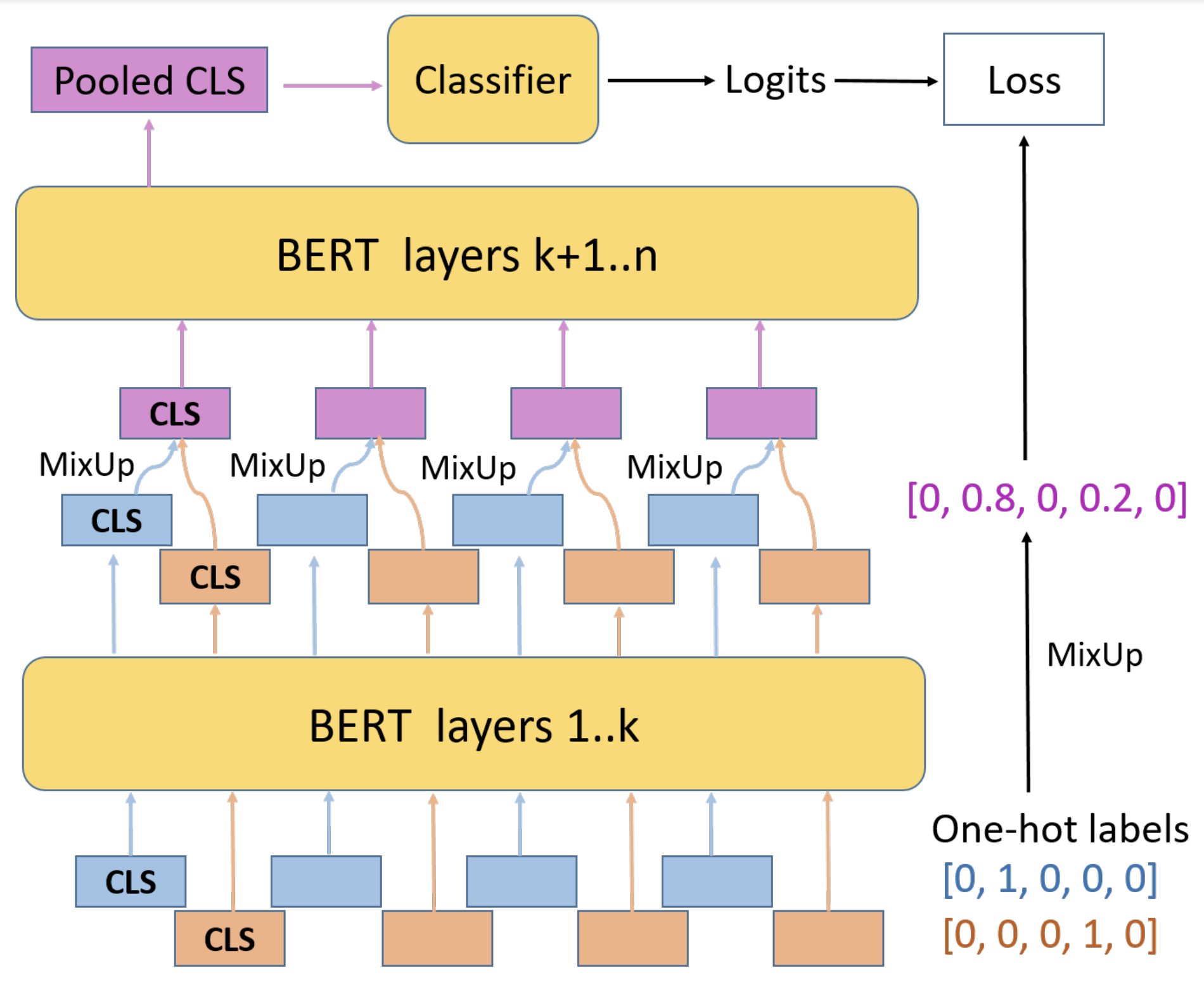

MixUp Training Leads to Reduced Overfitting and Improved Calibration for the Transformer Architecture

Wancong Zhang, Ieshan Vaidya

2021

Adapts the computer vision data augmentation technique MixUp to the natural language domain, reducing calibration error of transformers for sentence classification by up to 50%.

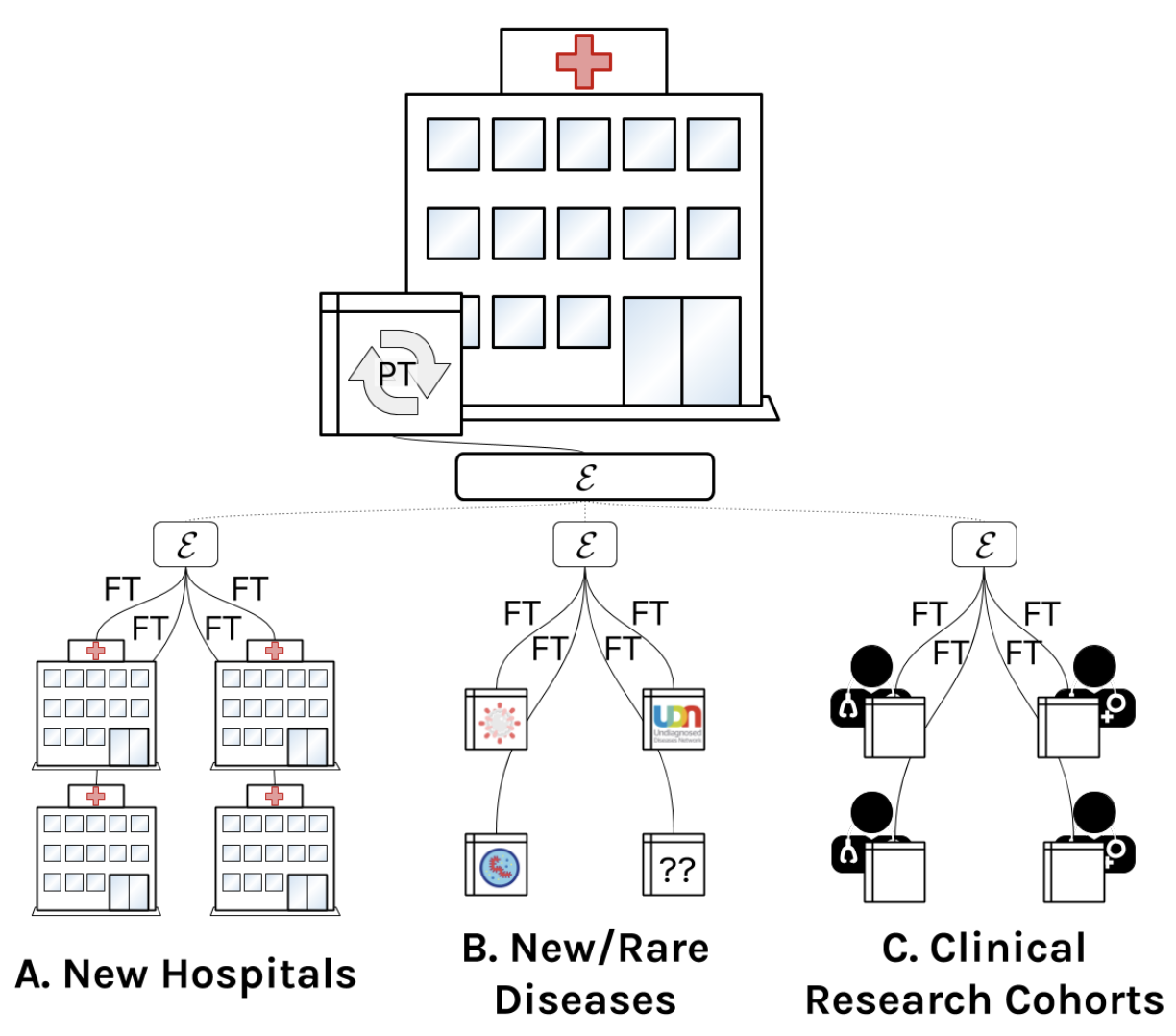

A comprehensive EHR timeseries pre-training benchmark

Matthew McDermott, Bret Nestor, Evan Kim, Wancong Zhang, Anna Goldenberg, Peter Szolovits, Marzyeh Ghassemi

Conference on Health, Inference, and Learning (CHIL), 2021

Establishes a pre-training benchmark protocol for electronic health record (EHR) data.

Teaching

Deep Learning (DS-GA 1008), Fall 2024
